[Editor’s Note: Mad Scientist Laboratory is pleased to publish the following post by repeat guest blogger Mr. Victor R. Morris, addressing the relationship of Artificial Intelligence (AI), Robotic and Autonomous systems (RAS), and Quantum Information Science (QIS) to Quantum Artificial Intelligence (QAI), and why we should pursue a parallel QAI strategy in order to predict alternative possibilities in a quantum multiverse. Prepare to have your consciousness expanded — Read on! (Note: Some of the embedded links in this post are best accessed using non-DoD networks.)]

Introduction

The U.S. defense industry routinely analyzes emerging and potentially disruptive technological trends influencing long-term strategic competition. This post describes the greater defense community as public and private sectors responsible for national security and associated interests abroad. Interstate competition has implications for global order and disorder, according to the 2018 National Defense Strategy summary.

The three defense industry trends identified in this post are:

- Artificial Intelligence (AI),

- Robotic and Autonomous systems (RAS), and

- Quantum Information Science (QIS).

According to Paul Scharre‘s preface to Elsa Kania‘s paper on Battlefield Singularity, published by the Center for a New American Security (CNAS), “Artificial intelligence (AI) is fast heating up as a key area of strategic competition.” (N.B., both Mr. Scharre and Ms. Kania are proclaimed Mad Scientists whose works have previously graced this blog site). Furthermore, structured analysis identified interrelated aspects of these trends and the requirement for a multi-disciplinary strategy focused on Quantum Artificial Intelligence (QAI), anticipating the potential impact on global systems.

A number of countries have published AI strategies during the past 21 months. China is routinely assessed as a leader in AI development and investment. Russia is finalizing its draft National AI Roadmap; the final version will be released mid-year 2019. The U.S. is scheduled to release its national strategy for artificial intelligence in early 2019. This intentionally or unintentionally follows the National Strategic Overview for Quantum Information Science released in September 2018. Additionally, around the same time period, both the Massachusetts Institute of Technology (MIT) and U.S. Defense Advanced Research Projects Agency (DARPA) announced billion-dollar investment plans for AI research and technologies. The U.S. Army’s Robotic and Autonomous Systems Strategy publication coincided with Canada’s AI strategy in March 2017. Canada was the first country to announce an AI strategy and future investment in the AI ecosystem. The European Union followed up on recent strategic developments by announcing a plan fostering AI development and employment last month.

First, this post argues that AI, QIS, and RAS are components of a greater QAI ecosystem underpinned by the scientific notion of information discussed in detail later. Information does not measure what is known; rather, it measures the number of possible alternatives for something. Combining AI and quantum computing applications potentially results in QAI, according to a variety of scientists and theorists in the field. Additionally, information is the nucleus or “quanta” of the entire QAI ecosystem. Understanding information is critical to understanding the natural world. Secondly, the post argues “keeping up with the Joneses” in AI is counterproductive and perpetuates misunderstanding of advancements and implications for the future.

The first section of this post briefly describes AI, Machine Learning (ML), RAS, QIS, and QAI, and their relationships with information. The second section describes theoretical interpretations of reality based on quantum mechanical properties.

Section 1 Overview

AI, sometimes called machine intelligence, includes the machine learning field enabling autonomous or independent functions and activity. QIS and computing are the next evolution of classical computing with implications for machine learning, reasoning, and autonomous systems behavior. As mentioned above, information is a fundamental consideration for all of these fields and the ability to perform parallel probabilistic tasks. “Probabilistic” refers to probabilities indirectly associated with randomness.

Artificial Intelligence (AI) and Machine Learning (ML)

AI involves computer systems performing tasks normally requiring human intelligence. In computer science, AI is the study of intelligent agents or autonomous entities perceiving and acting upon their environment. In simpler terms, AI is intelligence exhibited by machines, enabled by machine learning algorithms. Algorithms are rule sets defining sequences of operations. ML is a field of AI and a set of statistical techniques associated with machines performing intellectual, human tasks. ML includes deep learning and is critical to AI because it involves Artificial Neural Networks (ANN), loosely modeled on the human brain, that enable learning from large quantities of data to improve predictions and data-driven decisions. ANNs are a framework for ML algorithms working together to process complex data sets.

Robotic and Autonomous Systems (RAS)

Robots are one type of AI entity, while others include cyber agents, decision aids, and virtual assistants. Amazon’s Alexa and Apple’s Siri are good examples of AI-enabled virtual assistants using ML to perform tasks. In a military context, RAS are technologies granted autonomy, or a level of independence, to execute tasks in a prescribed environment. RAS examples include both land and air systems like explosive ordnance disposal robots and unmanned aerial vehicles commonly referred to as “drones.” Autonomous behavior is designed by humans through a combination of sensors and advanced computing processes. Advanced computing involves both environmental navigation and software-enabled decision-making. RAS independence is a progressive spectrum, ranging from remote control to full autonomy.

Quantum Information Science (QIS)

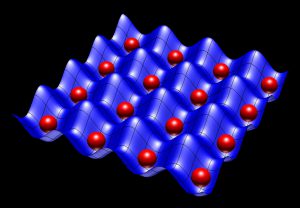

According to the September 2018 United States Government’s National Strategic Overview for Quantum Information Science report, “Quantum information science (QIS) applies the best understanding of the sub-atomic world—quantum theory—to generate new knowledge and technologies.” Quantum theory, also called quantum mechanics, describes the smallest finite quantities, or “quanta,” making up the quantum fields composing the universe. QIS includes the quantum computing field using quantum mechanical properties to advance information processing, transmission, and measurement. For example, quantum computation uses the quantum analog of a bit, called a quantum bit, existing in multiple states due to quantum superposition. Superposition allows quantum systems the ability to simultaneously occupy different quantum states. This fundamental principle means qubits are described as a linear combination of 0 and 1 (composition of basis states), and not solely 0 or 1 as in classical computing before measurement.
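
The “linear combination of 0 and 1” can be made concrete with a minimal sketch. It assumes the standard textbook description of a single qubit as two complex amplitudes whose squared magnitudes sum to one; the function names are illustrative and are not drawn from the strategy document or from any quantum computing library.

```python
import math

def normalize(alpha, beta):
    """Scale two amplitudes so that |alpha|^2 + |beta|^2 == 1."""
    norm = math.sqrt(abs(alpha) ** 2 + abs(beta) ** 2)
    if norm == 0:
        raise ValueError("at least one amplitude must be non-zero")
    return alpha / norm, beta / norm

def measurement_probabilities(alpha, beta):
    """Probability of reading 0 or 1 is the squared magnitude of each amplitude."""
    return abs(alpha) ** 2, abs(beta) ** 2

# An equal superposition of the basis states 0 and 1,
# with a relative phase (the imaginary unit) on the 1 component.
alpha, beta = normalize(1, 1j)
p0, p1 = measurement_probabilities(alpha, beta)
print(p0, p1)
```

Before measurement the state is described by both amplitudes at once; a measurement returns either 0 or 1, each with the probability computed above.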

Quantum Artificial Intelligence (QAI)

This section does not attempt to explain AI and QIS intersections in detail. Both areas are so extensive that unifying concepts are difficult to understand. This post sees QAI as a different element of the taxonomy and not a subset of classical AI. “Quantum physics is based on information theory and probability theory” according to Andreas Wichert, author of Principles of Quantum Artificial Intelligence. He presents both theories in his book, highlighting quantum physics’ relationship to AI through associative memory and Bayesian networks. Associative memory and Bayesian networks are applied later to QAI based on their access to information.

Section 2 Overview

This section outlines interpretations of information and quantum theories and AI intersections. Information is a finite measurement of possible alternatives existing in the multiverse. Quantum computing has the potential for reversible or time-invertible deep learning and associative memory based on quantum entanglement and superposition. Quantum AI has the potential to test the multiverse theory, because QAI networks process, transmit, and measure information across space-time.

Information Theory

Information takes many forms that differ from one another, like natural language, symbols, acoustic speech, and pictures. The scientific notion of information is more precise. Information theory, proposed by Claude E. Shannon, studies the quantification, storage, and communication of information. Once again, information does not measure what is known; it measures the number of possible alternatives for something.

Carlo Rovelli uses a dice example in his book Reality Is Not What It Seems: The Journey to Quantum Gravity to illustrate this point. If a die is thrown, it can land on one of six sides. When we observe it fall on a number, we have an amount of information where N=6 because the possible alternatives are six. Instead of “N” (number of alternatives), scientists measure information in terms of a quantity deemed “S” after Shannon. Rovelli also states information is finite in nature based on quantum mechanical properties. New or “relevant” information cancels out “irrelevant” information in a physical system, therefore systems can always obtain new information from other systems. *This point is important for later.
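
As a worked example of moving from “N” to “S,” assume Shannon’s usual measure for N equally likely alternatives, the base-2 logarithm of N. The formula is not quoted in this post, so treat it as the standard convention rather than the author’s wording.

```latex
S = \log_2 N = \log_2 6 \approx 2.585 \ \text{bits}
```

By the same convention a coin toss (N=2) carries exactly one bit.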

Measuring Possible Alternatives

The fundamental unit of classical information is a “bit.” The natural unit of information, or “nat,” is an alternative unit of information or entropy based on the natural logarithm. Information entropy is the average rate at which information is produced by a random source of data. It can be measured in bits, nats, or decimal digits, depending on the base of the logarithm defining it. Once again, a binary digit, taking the value 0 or 1, represents information in classical computing. A quantum bit, or “qubit,” is the basic unit of quantum information in the quantum world. A qubit can be a coherent superposition of both 0 and 1 eigenstates according to quantum mechanical properties. A qubit’s state can therefore describe more alternatives than a classical bit, although a single measurement still returns only 0 or 1. Lastly, probability amplitudes are complex numbers. The squared magnitude of each amplitude gives the probability that the qubit appears in the corresponding basis state when measured.
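
How the logarithm base sets the unit can be sketched in a few lines. The example reuses the fair six-sided die from the previous section; the `entropy` helper is an illustrative function written for this sketch, not part of any library.

```python
import math

def entropy(probabilities, base=2.0):
    """Shannon entropy H = -sum(p * log(p)), in the unit set by `base`."""
    if abs(sum(probabilities) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    # Outcomes with zero probability contribute nothing to the sum.
    return -sum(p * math.log(p, base) for p in probabilities if p > 0)

fair_die = [1 / 6] * 6
h_bits = entropy(fair_die, base=2)        # bits (base-2 logarithm)
h_nats = entropy(fair_die, base=math.e)   # nats (natural logarithm)
h_digits = entropy(fair_die, base=10)     # decimal digits (base-10 logarithm)
print(h_bits, h_nats, h_digits)
```

The three results describe the same uncertainty; they differ only by a constant factor, for example one bit equals ln 2 nats.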

Quantum Machine Learning through Quantum Information

Quantum ANNs potentially enable deep learning from large quantities of qubits. Qubits are information, so they measure possible alternatives. In this specific interpretation, quantum ANNs are like Bayesian networks graphically modelling probabilistic relationships. The quantum nature of these networks expands access to reciprocal or correlated information.

An interpretation of reciprocal information is discussed through quantum mechanical properties and the quantum many-worlds theory, also called “multiverse” theory, in the last part of this post. This specific interpretation holds that multiverses are finite because information is. This is loosely based on Stephen Hawking and Thomas Hertog’s April 2018 article, A smooth exit from eternal inflation?, where they state, “eternal inflation does not produce an infinite fractal-like multiverse, but is finite and reasonably smooth.”

Quantum Many Worlds

Quantum computing has the potential to allow reversible or time-invertible deep learning and associative memory, based on quantum entanglement and superposition. Qubits contain entangled relevant and irrelevant (anti-correlated probabilities) information across space-time. This concept ensures a retro-causality loop of finite information exchange. Quantum associative memory is the ability to learn and remember correlations between seemingly unrelated items. This is possible because all “items” are correlated through quantum phenomena. Relevant information in one world or universe (macro possible alternative) is simultaneously irrelevant information in the adjacent world because of quantum states and the finite quantity of information in nature. Quantum information cannot be copied according to the no-cloning theorem. Conversely, it cannot be deleted, based on a time-reversed dual called the “no-deleting theorem.”
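
The no-cloning theorem can be sketched with the standard textbook linearity argument. This derivation is not taken from the post; it assumes a hypothetical copying operation U acting on a qubit and a blank target.

```latex
\begin{aligned}
\text{Assume, for every state } |\psi\rangle: \quad
U\bigl(|\psi\rangle \otimes |0\rangle\bigr) &= |\psi\rangle \otimes |\psi\rangle \\
\text{Linearity, with } |\psi\rangle = \alpha|0\rangle + \beta|1\rangle: \quad
U\bigl(|\psi\rangle \otimes |0\rangle\bigr) &= \alpha\,|00\rangle + \beta\,|11\rangle \\
\text{Cloning would instead require:} \quad
|\psi\rangle \otimes |\psi\rangle &= \alpha^2|00\rangle + \alpha\beta\,|01\rangle + \alpha\beta\,|10\rangle + \beta^2|11\rangle
\end{aligned}
```

The last two lines can agree only when αβ = 0, that is, when the state is already a basis state rather than a superposition, so no single operation copies an arbitrary unknown qubit.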

Information is the quanta of consciousness. It is a measurement of awareness following all possible trajectories through the quantum multiverse ensuring the feedback loop of finite information that is reality.

This specific interpretation is based on Hugh Everett’s relative state or many-worlds interpretation (MWI) and information reality code concept. MWI states “all possible alternate histories and futures are real, each representing an actual world” or universe. The reality code behaves similarly to classical coding. Coding theory is the application of information theory manifesting efficient and reliable data transmission in a non-deterministic manner (where meaning is relative). Information in a data set is characterized by its Shannon entropy.

Summary of Key Points (You made it!)

• The QAI ecosystem is underpinned by the scientific notion of information

• Information does not measure what is known, it measures the number of possible alternatives for something

• Relevant information cancels out irrelevant information in a physical system, therefore systems can always obtain new information from other systems

• A qubit can be a coherent superposition of both 0 and 1 eigenstates, according to quantum mechanical properties

• Qubits contain entangled relevant and irrelevant information across the multiverse

• MWI states all possible alternate histories and futures are real, each representing an “actual world” or universe

• Multiverses are finite because information is

• Information is the quanta of consciousness and measurement of awareness

What’s Next?

One interpretation of AI is that whoever becomes the leader in this sphere will become the ruler of the world. This is one possible alternative for QAI. Another possible alternative is the validation of the many-worlds theory, providing insight into observable world alternate histories and optimized futures because information is available to QAI agent networks. The predictive nature of classical AI to support global superpower decision-making may not happen as planned either. Predictions in the observable world exist in other worlds, so AI predicting the observable future is relative. For example, when a die lands on the number 1 in the observable world, it lands on the other five alternatives in alternate worlds. Additionally, unknown events in the observable world are known elsewhere in the quantum multiverse and vice versa (alternate histories and futures). Physicist David Deutsch, a proponent of the MWI, believes MWI will be testable through quantum computing. Based on this blog’s conjecture, developing a parallel QAI strategy is the first step in preparing for our changing understanding of the world.

If you enjoyed this mind-bending post, please see Mr. Morris’ previous guest blog posts:

And read Ms. Kania’s Quantum Surprise on the Battlefield?

Victor R. Morris is a civilian irregular warfare and threat mitigation instructor at the Joint Multinational Readiness Center (JMRC) in Germany.

Humans are susceptible to cognitive biases and these biases sometimes result in catastrophic outcomes, particularly in the high stress environment of war-time decision-making. Artificial Intelligence (AI) offers the possibility of mitigating the susceptibility of negative outcomes in the commander’s decision-making process by enhancing the collective Emotional Intelligence (EI) of the commander and his/her staff. AI will continue to become more prevalent in combat and as such, should be integrated in a way that advances the EI capacity of our commanders. An interactive AI that feels like one is communicating with a staff officer, which has human-compatible principles, can support decision-making in high-stakes, time-critical situations with ambiguous or incomplete information.

The mission command philosophy necessitates improved EI. EI is defined as the capacity to be aware of, control, and express one’s emotions, and to handle interpersonal relationships judiciously and empathetically, at much quicker speeds in order to seize the initiative in war.

The advent of machine-learning algorithms that could be applied to autonomous lethal weapons systems has so far resulted in a general predilection towards ensuring humans remain in the decision-making loop with respect to all aspects of warfare.

The Battalion is a good example organization to visualize this framework. A machine-learning software system could be connected into different staff systems to analyze data produced by the section as they execute their warfighting functions. This machine-learning software system would also assess the human-in-the-loop decisions against statistical outcomes and aggregate important data to support the commander’s assessments. Over time, this EI-based machine-learning software system could rank the quality of the staff officers’ judgements. The commander can then consider the value of the staff officers’ assessments against the officers’ track-record of reliability and the raw data provided by the staff sections’ systems. The Bridgewater financial firm employs this very type of human decision-making assessment algorithm in order to assess the “believability” of their employees’ judgements before making high-stakes, and sometimes time-critical, international financial decisions.

Stuart Russell offers a construct of limitations that should be coded into AI in order to make it most useful to humanity and prevent conclusions that result in an AI turning on humanity. These three concepts are: 1) principle of altruism towards the human race (and not itself), 2) maximizing uncertainty by making it follow only human objectives, but not explaining what those are, and 3) making it learn by exposing it to everything and all types of humans.

The potential opportunities and pitfalls are abundant for the employment of AI in decision-making. Apart from the obvious danger of this type of system being hacked, the possibility of the AI machine-learning algorithms harboring biased coding inconsistent with the values of the unit employing it is real.

The commander’s primary goal is to achieve the mission. The future includes AI, and commanders will need to trust and integrate AI assessments into their natural decision-making process and make it part of their intuitive calculus. In this way, they will have ready access to objective analyses of their units’ potential biases, enhancing their own EI, and be able to overcome them to accomplish their mission.
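
The idea of weighing staff assessments against each officer’s track record, described above for the Battalion example, can be illustrated with a minimal sketch. Everything here is a hypothetical assumption made for illustration: the `StaffAssessment` fields, the smoothed hit-rate used as a reliability score, and the sample numbers. It is not a description of Bridgewater’s algorithm or of any fielded system.

```python
from dataclasses import dataclass

@dataclass
class StaffAssessment:
    officer: str        # hypothetical label for the staff section
    estimate: float     # officer's estimated probability of success, 0..1
    correct_calls: int  # past judgements that matched the outcome
    total_calls: int    # all past judgements on record

def reliability(a):
    """Smoothed hit rate, so a short track record is not over-trusted."""
    return (a.correct_calls + 1) / (a.total_calls + 2)

def weighted_estimate(assessments):
    """Average the estimates, weighting each by the officer's reliability."""
    if not assessments:
        raise ValueError("at least one assessment is required")
    weights = [reliability(a) for a in assessments]
    weighted_sum = sum(w * a.estimate for w, a in zip(weights, assessments))
    return weighted_sum / sum(weights)

staff = [
    StaffAssessment("S2", estimate=0.80, correct_calls=18, total_calls=20),
    StaffAssessment("S3", estimate=0.40, correct_calls=5, total_calls=20),
]
ranked = sorted(staff, key=reliability, reverse=True)  # most reliable first
combined = weighted_estimate(staff)
print([a.officer for a in ranked], round(combined, 3))
```

The commander still sees each raw estimate; the combined figure only shows how far the picture shifts once past reliability is taken into account.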

This insightful book by economists Ajay Agrawal, Joshua Gans, and Avi Goldfarb penetrates the hype often associated with AI by describing its base functions and roles and providing the economic framework for its future applications. Of particular interest is their perspective of AI entities as prediction machines. In simplifying and de-mything our understanding of AI and Machine Learning (ML) as prediction tools, akin to computers being nothing more than extremely powerful mathematics machines, the authors effectively describe the economic impacts that these prediction machines will have in the future.

This insightful book by economists Ajay Agrawal, Joshua Gans, and Avi Goldfarb penetrates the hype often associated with AI by describing its base functions and roles and providing the economic framework for its future applications. Of particular interest is their perspective of AI entities as prediction machines. In simplifying and de-mything our understanding of AI and Machine Learning (ML) as prediction tools, akin to computers being nothing more than extremely powerful mathematics machines, the authors effectively describe the economic impacts that these prediction machines will have in the future. Training: This is the Big Data that trains the underlying AI algorithms in the first place. Generally, the bigger and most robust the data set is, the more effective the AI’s predictive capability will be. Activities such as driving (with millions of iterations every day) and online commerce (with similar large numbers of transactions) in defined environments lend themselves to efficient AI applications.

Training: This is the Big Data that trains the underlying AI algorithms in the first place. Generally, the bigger and most robust the data set is, the more effective the AI’s predictive capability will be. Activities such as driving (with millions of iterations every day) and online commerce (with similar large numbers of transactions) in defined environments lend themselves to efficient AI applications. Feedback: This data comes from either manual inputs by users and developers or from AI understanding what effects took place from its previous applications. While often overlooked, this data is critical to iteratively enhancing and refining the AI’s performance as well as identifying biases and askew decision-making. AI is not a static, one-off product; much like software, it must be continually updated, either through injects or learning.

Feedback: This data comes from either manual inputs by users and developers or from AI understanding what effects took place from its previous applications. While often overlooked, this data is critical to iteratively enhancing and refining the AI’s performance as well as identifying biases and askew decision-making. AI is not a static, one-off product; much like software, it must be continually updated, either through injects or learning. “… their expertise is confined to a single domain, as opposed to hypothetical future “general” AI systems that could apply expertise more broadly. Machines – at least for now – lack the general-purpose reasoning that humans use to flexibly perform a range of tasks: making coffee one minute, then taking a phone call from work, then putting on a toddler’s shoes and putting her in the car for school.” – from

“… their expertise is confined to a single domain, as opposed to hypothetical future “general” AI systems that could apply expertise more broadly. Machines – at least for now – lack the general-purpose reasoning that humans use to flexibly perform a range of tasks: making coffee one minute, then taking a phone call from work, then putting on a toddler’s shoes and putting her in the car for school.” – from

2. The U.S. military will not be able to just “throw AI on it” and achieve effective results. The effective application of AI will require a disciplined and comprehensive review of all warfighting functions to determine where AI can best augment and enhance our current Soldier-centric capabilities (i.e., identify those workflows and processes, such as the Intelligence and Targeting Cycles, that can be enhanced with the application of AI). Leaders will also have to assess where AI can replace Soldiers in workflows and organizational architecture, and whether AI necessitates the discarding or major restructuring of either. Note that Goldman Sachs is in the process of conducting this type of self-evaluation right now.

3. Due to its incredible “thirst” for Big Data, AI/ML will necessitate tradeoffs between security and privacy (the former likely being more important to the military) and quantity and quality of data.

4. In the near to mid-term future, AI/ML will not replace Leaders, Soldiers, and Analysts, but will allow them to focus on the big issues (i.e., “the fight”) by freeing them from the resource-intensive (i.e., time and manpower) mundane and rote tasks of data crunching, possibly facilitating the reallocation of manpower to growing need areas in data management, machine training, and AI translation.

Given that the algorithms supporting future Intelligence, Surveillance, and Reconnaissance (ISR) networks and Commander’s decision support aids will have inherent biases, what is the impact on future warfighting? This question is exceptionally relevant as Soldiers and Leaders consider the influence of biases in man-machine relationships, and their potential ramifications on the battlefield, especially with regard to the rules of engagement (i.e., mission execution and combat efficiency versus the proportional use of force and minimizing civilian casualties and collateral damage).

The Mad Scientist Initiative has developed a series of questions to help frame the discussion regarding what biases we are willing to accept and in what cases they will be acceptable. Feel free to share your observations and questions in the comments section of this blog post (below) or email them to us at: usarmy.jble.tradoc.mbx.army-mad-scientist@mail.mil.

2) In what types of systems will we accept biases? Will machine learning applications in supposedly non-lethal warfighting functions like sustainment, protection, and intelligence be given more leeway with regard to bias?

4) At what point will the pace of innovation and introduction of this technology on the battlefield by our adversaries cause us to forgo concerns of bias and rapidly field systems to gain a decisive Observe, Orient, Decide, and Act (OODA) loop and combat speed advantage on the