[Editor’s Note: Mad Scientist Laboratory is pleased to present the first of two guest blog posts by Dr. Nir Buras. In today’s post, he makes the compelling case for the establishment of man-machine rules. Given the vast technological leaps we’ve made during the past two centuries (with associated societal disruptions), and the potential game changing technological innovations predicted through the middle of this century, we would do well to consider Dr. Buras’ recommended list of nine rules — developed for applicability to all technologies, from humankind’s first Paleolithic hand axe to the future’s much predicted, but long-awaited strong Artificial Intelligence (AI).]

Two hundred years of massive collateral impacts by technology have brought to the forefront of society’s consciousness the idea that some sort of rules for man-machine interaction are necessary, similar to the rules in place for gun safety, nuclear power, and biological agents. But where their physical effects are clear to see, the power of computing is veiled in virtuality and anthropomorphization. It appears harmless, if not familiar, and it often has a virtuous appearance.

Computing originated in the punched cards of Jacquard looms early in the 19th century. Today it carries the promise of a cloud of electrons from which we make our Emperor’s New Clothes. As far back as 1842, the brilliant mathematician Ada Augusta, Countess of Lovelace (1815-1852), foresaw the potential of computers. A protégé and associate of Charles Babbage (1791-1871), conceptual originator of the programmable digital computer, she realized the “almost incalculable” ultimate potential of such difference engines. She also recognized that, as in all extensions of human power or knowledge, “collateral influences” occur.1

AI presents us with such “collateral influences.”2 The question is not whether machine systems can mimic human abilities and nature, but when. Will the world become dependent on ungoverned algorithms?3 Should there be limits to mankind’s connection to machines? As concerns mount, well-meaning politicians, government officials, and some in the field are trying to forge ethical guidelines to address the collateral challenges of data use, robotics, and AI.4

A Hippocratic Oath of AI?

Asimov’s Three Laws of Robotics are merely a literary device to drive his storylines.5 In the real world, Apple, Amazon, Facebook, Google, DeepMind, IBM, and Microsoft founded the Partnership on AI (www.partnershiponai.org)6 to ensure “… the safety and trustworthiness of AI technologies, the fairness and transparency of systems.” Data scientists from tech companies, governments, and nonprofits gathered to draft a voluntary digital charter for their profession.7 Oren Etzioni, CEO of the Allen Institute for AI and a professor at the University of Washington’s Computer Science Department, proposed a Hippocratic Oath for AI.

But such codes are composed of hard-to-enforce terms and vague goals, such as using AI “responsibly and ethically, with the aim of reducing bias and discrimination.” They pay lip service to privacy and human priority over machines. They appear to sugarcoat a culture which passes the buck to the lowliest Soldier.8

We know that good intentions are inadequate when enforcing confidentiality. Well-meant but unenforceable ideas don’t meet business standards. It is unlikely that techies and their bosses, caught up in the magic of coding, will shepherd society through the challenges of the petabyte AI world.9 Vague principles, underwriting a non-binding code, cannot counter the cynical drive for profit.10

Indeed, in an area that lacks authorities or legislation to enforce rules, the Association for Computing Machinery (ACM) is itself backpedaling from its own Code of Ethics and Professional Conduct. Their document weakly defines notions of “public good” and “prioritizing the least advantaged.”11 Microsoft’s President Brad Smith admits that his company wouldn’t expect customers of its services to meet even these standards.

In the wake of the Cambridge Analytica scandal, it is clear that coders are not morally superior to other people and that voluntary, unenforceable Codes and Oaths are inadequate.12 Programming and algorithms clearly reflect ethical, philosophical, and moral positions.13 It is false to assume that the so-called “openness” trait of programmers reflects a broad mindfulness. There is nothing heroic about “disruption for disruption’s sake” or hiding behind “black box computing.”14 The future cannot be left up to an adolescent-centric culture in an economic system that rests on greed.15 The society that adopts “Electronic personhood” deserves it.

Machines are Machines, People are People

After 200 years of the technology tail wagging the humanity dog, it is apparent now that we are replaying history – and don’t know it. Most human cultures have been intensively engaged with technology since before the Iron Age 3,000 years ago. We have been keenly aware of technology’s collateral effects mostly since the Industrial Revolution, but have not yet created general rules for how we want machines to impact individuals and society. The blurring of reality and virtuality that AI brings to the table might prompt us to do so.

Distinctions between the real and the virtual must be maintained if the behavior of the most sophisticated computation machines and robots is to be captured by legal systems. Nothing in the virtual world should be considered real any more than we believe that the hallucinations of a drunk or drugged person are real.

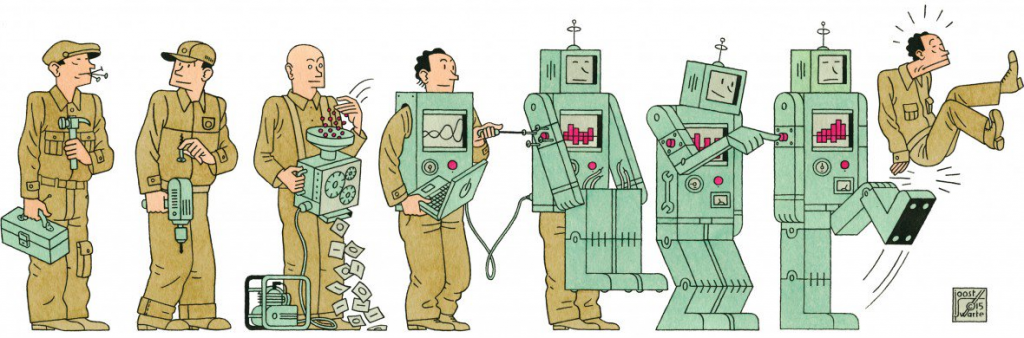

The simplest way to maintain the distinction is remembering that the real IS, and the virtual ISN’T, and that virtual mimesis is produced by machines. Lovelace reminded us that machines are just machines. While in a dark, distant future, giving machines personhood might lead to the collapse of humanity, Harari’s Homo Deus warns us that AI, robotics, and automation are quickly bringing the economic value of humans to zero.16

From the start of civilization, tools and machines have been used to reduce human drudge labor and increase production efficiency. But while tools and machines obviate physical aspects of human work in the context of the production of goods or processing information, they in no way affect the truth of humans as sentient and emotional living beings, nor the value of transactions among them.

The man-machine line is further blurred by our anthropomorphizing machinery, computing, and programming. We speak of machines in terms of human traits, and make programming analogous to human behavior. But there is nothing amusing about garbage-in, garbage-out (GIGO) experiments like MIT’s psychotic bot Norman, or Microsoft’s fascist Tay.17 Technologists who fall into the trap of believing that AI systems can make decisions are like children playing with dolls, marveling that “their dolly is speaking.”

Machines don’t make decisions. Humans do. Humans may accept suggestions made by machines, and when they do, they are responsible for the decisions made. People are and must be held accountable, especially those hiding behind machines. The Holocaust taught us that one can never say, “I was just following orders.”

Nothing less than enforceable operational rules is required for any technical activity, including programming. It is especially important for tech companies, since evidence suggests that they take ethical questions to heart only under direct threats to their balance sheets.18

When virtuality offers experiences that humans perceive as real, the outcomes are the responsibility of the creators and distributors, no less than tobacco companies selling cigarettes, or pharmaceutical companies and cartels selling addictive drugs. Individuals do not have the right to risk the well-being of others to satisfy their need to comply with clichés such as “innovation” and “disruption.”

Nuclear, chemical, biological, gun, aviation, machine, and automobile safety rules do not rely on human nature. They are based on technical rules and procedures. They are enforceable, and moral responsibility is typically carried by the hierarchies of their organizations.19

As we master artificial intelligence, human intelligence must take charge.20 The highest value known to mankind remains human life and the qualities and quantities necessary for the best individual life experience.21 For the transactions and transformations in which technology assists, we need simple operational rules to regulate the actions and manners of individuals. Moving the focus to human interactions empowers individuals and society.

Man-Machine Rules

Man-Machine rules should address any tool or machine ever made or to be made. They would be equally applicable to any technology of any period, from the first flaked stone, to the ultimate predictive “emotion machines.” They would be adjudicated by common law.22

1. All material transformations and human transactions are to be conducted by humans.

2. Humans may directly employ hand/desktop/workstation devices in the above.

3. At all times, an individual human is responsible for the activity of any machine or program.

4. Responsibility for errors, omissions, negligence, mischief, or criminal-like activity is shared by every person in the organizational hierarchical chain, from the lowliest coder or operator, to the CEO of the organization, and its last shareholder.

5. Any person can shut off any machine at any time.

6. All computing is visible to anyone [No Black Box].

7. Personal Data are things. They belong to the individual who owns them, and any use of them by a third-party requires permission and compensation.

8. Technology must age before common use, until an Appropriate Technology is selected.

9. Disputes must be adjudicated according to Common Law.

Machines are here to help and advise humans, not replace them, and humans may exhibit a spectrum of responses to them. Some may ignore a robot’s advice and put others at risk. Some may follow recommendations to the point of becoming a zombie. But either way, Man-Machine Rules are based on and meant to support free, individual human choices.

Man-Machine Rules can help organize dialog around questions such as how to secure personal data. Do we need hardcopy and analog formats? How ethical are chips embedded in people and in their belongings? What degrees and controls are contemplatable for personal freedoms and personal risk? Will consumer rights and government organizations audit algorithms?23 Would equipment sabbaticals be enacted for societal and economic balances?

The idea that we can fix the tech world through a voluntary ethical code emergent from itself paradoxically expects that the people who created the problems will fix them.24 The question is not whether the focus should shift to human interactions that leave more humans in touch with their destiny. The question is at what cost. If not now, when? If not by us, by whom?

If you enjoyed reading this post, please also see:

Prediction Machines: The Simple Economics of Artificial Intelligence

Artificial Intelligence (AI) Trends

Making the Future More Personal: The Oft-Forgotten Human Driver in Future’s Analysis

Nir Buras is a PhD architect and planner with over 30 years of in-depth experience in strategic planning, architecture, and transportation design, as well as teaching and lecturing. His planning, design and construction experience includes East Side Access at Grand Central Terminal, New York; International Terminal D, Dallas-Fort-Worth; the Washington DC Dulles Metro line; work on the US Capitol and the Senate and House Office Buildings in Washington. Projects he has worked on have been published in the New York Times, the Washington Post, local newspapers, and trade magazines. Buras, whose original degree was Architect and Town planner, learned his first lesson in urbanism while planning military bases in the Negev Desert in Israel. Engaged in numerous projects since then, Buras has watched first-hand how urban planning impacted architecture. After the last decade of applying in practice the classical method that Buras learned in post-doctoral studies, his book, *The Art of Classic Planning* (Harvard University Press, 2019), presents the urban design and planning method of Classic Planning as a path forward for homeostatic, durable urbanism.

1 Lovelace, Ada Augusta, Countess, Sketch of The Analytical Engine Invented by Charles Babbage by L. F. Menabrea of Turin, Officer of the Military Engineers, With notes upon the Memoir by the Translator, Bibliothèque Universelle de Genève, October, 1842, No. 82.

2 Oliveira, Arlindo, in Pereira, Vitor, Hippocratic Oath for Algorithms and Artificial Intelligence, Medium.com (website), 23 August 2018, https://medium.com/predict/hippocratic-oath-for-algorithms-and-artificial-intelligence-5836e14fb540; Middleton, Chris, Make AI developers sign Hippocratic Oath, urges ethics report: Industry backs RSA/YouGov report urging the development of ethical robotics and AI, computing.co.uk (website), 22 September 2017, https://www.computing.co.uk/ctg/news/3017891/make-ai-developers-sign-a-hippocratic-oath-urges-ethics-report; N.A., Do AI programmers need a Hippocratic oath?, Techhq.com (website), 15 August 2018, https://techhq.com/2018/08/do-ai-programmers-need-a-hippocratic-oath/

3 Oliveira, 2018; Dellot, Benedict, A Hippocratic Oath for AI Developers? It May Only Be a Matter of Time, Thersa.org (website), 13 February 2017, https://www.thersa.org/discover/publications-and-articles/rsa-blogs/2017/02/a-hippocratic-oath-for-ai-developers-it-may-only-be-a-matter-of-time; See also: Clifford, Catherine, Expert says graduates in A.I. should take oath: ‘I must not play at God nor let my technology do so’, Cnbc.com (website), 14 March 2018, https://www.cnbc.com/2018/03/14/allen-institute-ceo-says-a-i-graduates-should-take-oath.html; Johnson, Khari, AI Weekly: For the sake of us all, AI practitioners need a Hippocratic oath, Venturebeat.com (website), 23 March 2018, https://venturebeat.com/2018/03/23/ai-weekly-for-the-sake-of-us-all-ai-practitioners-need-a-hippocratic-oath/; Work, Robert O., former deputy secretary of defense, in Metz, Cade, Pentagon Wants Silicon Valley’s Help on A.I., New York Times, 15 March 2018.

4 Schotz, Mai, Should Data Scientists Adhere To A Hippocratic Oath?, Wired.com (website), 8 February 2018, https://www.wired.com/story/should-data-scientists-adhere-to-a-hippocratic-oath/; du Preez, Derek, MPs debate ‘hippocratic oath’ for those working with AI, Government.diginomica.com (website), 19 January 2018, https://government.diginomica.com/2018/01/19/mps-debate-hippocratic-oath-working-ai/

5 1. A robot may not injure a human being or, through inaction, allow a human being to come to harm. 2. A robot must obey the orders given it by human beings except where such orders would conflict with the First Law. 3. A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws. Asimov, Isaac, Runaround, in I, Robot, The Isaac Asimov Collection ed., Doubleday, New York City, p. 40.

6 Middleton, 2017.

7 Etzioni, Oren, A Hippocratic Oath for artificial intelligence practitioners, Techcrunch.com (website), 14 March 2018. https://techcrunch.com/2018/03/14/a-hippocratic-oath-for-artificial-intelligence-practitioners/?platform=hootsuite

8 Do AI programmers need a Hippocratic oath?, Techhq, 2018.

9 Goodsmith, Dave, quoted in Schotz, 2018.

10 Schotz, 2018.

11 Do AI programmers need a Hippocratic oath?, Techhq, 2018. Wheeler, Schaun, in Schotz, 2018.

12 Gnambs, T., What makes a computer wiz? Linking personality traits and programming aptitude, Journal of Research in Personality, 58, 2015, pp. 31-34.

13 Oliveira, 2018.

14 Jarrett, Christian, The surprising truth about which personality traits do and don’t correlate with computer programming skills, Digest.bps.org.uk (website), British Psychological Society, 26 October 2015, https://digest.bps.org.uk/2015/10/26/the-surprising-truth-about-which-personality-traits-do-and-dont-correlate-with-computer-programming-skills/; Johnson, 2018.

15 Do AI programmers need a Hippocratic oath?, Techhq, 2018.

16 Harari, Yuval N. Homo Deus: A Brief History of Tomorrow. London: Harvill Secker, 2015.

17 That Norman suffered from extended exposure to the darkest corners of Reddit, and represents a case study on the dangers of artificial intelligence gone wrong when biased data is used in machine learning algorithms, is not an excuse. The AI Twitter bot Tay had to be deleted after it started making sexual references and declarations such as “Hitler did nothing wrong.”

18 Schotz, 2018.

19 See the example of Dr. Kerstin Dautenhahn, Research Professor of Artificial Intelligence in the School of Computer Science at the University of Hertfordshire, who claims no responsibility in determining the application of the work she creates. She might as well be feeding children shards of glass saying, “It is their choice to eat it or not.” In Middleton, 2017. The principle is that the risk of an unfavorable outcome lies with an individual as well as the entire chain of command, direction, and/or ownership of their organization, including shareholders of public companies and citizens of states. Everybody has responsibility the moment they engage in anything that could affect others. Regulatory “sandboxes” for AI developer experiments – equivalent to pathogen or nuclear labs – should have the same types of controls and restrictions. Dellot, 2017.

20 Oliveira, 2018.

21 The sentience and sensibilities of other beings are recognized here, but not addressed.

22 The proposed rules may be appended to the International Covenant on Economic, Social and Cultural Rights (ICESCR, 1976), part of the International Bill of Human Rights, which includes the Universal Declaration of Human Rights (UDHR) and the International Covenant on Civil and Political Rights (ICCPR). International Covenant on Economic, Social and Cultural Rights, www.refworld.org; EISIL International Covenant on Economic, Social and Cultural Rights, www.eisil.org; UN Treaty Collection: International Covenant on Economic, Social and Cultural Rights, UN. 3 January 1976; Fact Sheet No.2 (Rev.1), The International Bill of Human Rights, UN OHCHR. June 1996.

23 Dellot, 2017.

24 Schotz, 2018.

Really well done post and posited Man-Machine rules (Asimov’s literary plot devices work great within the worlds he constructed and only there).

The identified Rule 3 about responsibility would send some ripples out not just from a rule making aspect but from a lawfare and quantifiable aspect to the Commander; specific to armed UAS and potentially be able to answer the questions often asked “who is responsible when an armed drone takes out civilians?” The answer seems obvious but, to date, there is still serious discussion on that (to me it’s easy, armed UAS is a tool in the commander’s tool bag for his/her use and first rule of leadership = everything is your fault).

This would also truncate (or not) the efforts of Academia to develop ethics “chips” and software for armed UAS (so called autonomous killing machines which is tech that doesn’t exist) so the UAS could make its own decisions on whether to fire or not (which flies in the face of stated DOD policy that robots will not, in the foreseeable future, be able to conduct kills and that is strictly limited to humans) because, as the thought pattern goes “if more robots are used then mankind will go to war more often because it will be easier with no humans being killed.”

https://www.schneier.com/blog/archives/2008/01/ethics_of_auton.html

Re Rule 3 and the question of “who is responsible when an armed drone takes out civilians?” As Clausewitz said, “War is the continuation of politics by other means.” Civilians caught in the fray unwillingly is a problem when facing guerrillas/terrorism. If the population sides with terrorists, the terrorists act as their military arm and the civilians are responsible for their choice.

If not, let us note that terrorists, and guerrillas, who often use terrorist tactics, bring with them a form of communal punishment because they do not represent the whole. Civilians caught in the activity are fundamentally either victims of the terrorists or their unknowing hostages / human shields.

To work around that, a military has to know more about the enemy than they know themselves. A smart military will avoid unwinnable conflicts. Communities must be encouraged to disengage from terrorists bullying them in their midst and isolate them. They must choose to dissociate from the extremists among them so that tiny minorities do not have the opportunity to impact the whole negatively. The internet (social media) is a good, but not sole, tool for building good will and good sense, if not lasting loyalties prior to engagement.

What we learned from 9-11 and what came after is that Western education (the perpetrators were university educated) is not enough. We have to dig deep inside and rediscover what we stand for. It can’t be a statement of imposing democracy or liberating people alone. We have to dig deep down culturally to reconnect with the core values we cherish as a culture and society.

The technological shifts of the last 200 years have forced us “to a wall” where we have to reach a higher level of understanding if we are to survive as a culture, if not a species. Given that by the end of the century 90% of people will be living in cities, we might see a reversion to a global city-state political structure, with military engagement less in the centers (see blog on Megacity Strategy) than in the supporting hinterland, where the military’s role will once more be the control of territory, not people.

The history of armaments and defenses has a pattern of leap-frogging. We know that whatever “invincible” technology we have at hand today will be matched and then overcome tomorrow. Completely mechanized warfare may be replaced by diplomacy in many cases, but chances are not in all. Chances are that things will be much more fragmented than they are today, for better and worse.

We are definitely repeating history, and the place to learn from is the Iron Age *city states* of 1000 BCE. Based on long-term results, we will know whether we chose wisely. A hint: “There is nothing to fear but fear itself”.

In this context, the epilogue to the book *Classic Planning* (Harvard University Press, 2019) discusses the general *three futurist scenarios*, hi-tech, feral, and muddling through.