[Editor’s Note: Mad Scientist Laboratory is pleased to present the following guest blog post by Dr. Jason R. Dorvee, Mr. Richard G. Kidd IV, and Mr. John R. Thompson. The Army of the future will need installations that will enable strategic support areas critical to Multi-Domain Operations (MDO). There are 156 installations that serve as the initial platform of maneuver for Army readiness. Due to increasing connectivity of military bases (and the Soldiers, Airmen, Marines, Sailors, and Civilians who live and work there) to the Internet of Things (IoT), DoD and Army installations will not be the sanctuaries they once were. These threats are further discussed in Mr. Kidd’s AUSA article last December, entitled “Threats to Posts: Army Must Rethink Base Security.” The following story posits the resulting “what if,” should the Army fail to address installation resilience (to include Soldiers, their families, and surrounding communities) when modernizing the overall force to face Twenty-first Century threats.]

“Army Installations are no longer sanctuaries” — Mr. Richard G. Kidd IV, Deputy Assistant Secretary of the Army (Installations, Energy and Environment), Strategic Integration

Why the most powerful Army the world had ever seen… never showed up to the fight.

The adversary, recognizing that they could not defeat the U.S. Army in a straight-up land fight, kept the Army out of the fight by creating hundreds of friction points around Army installations that disrupted, delayed, and ultimately prevented the timely application of combat power.

The year was 2030. New weapons, doctrine, training, and individual readiness came together to make the U.S. Army the most capable land force in the world. Fully prepared, the Army was ready to fight and win in the complex environments of multi-domain operations. The Army Futures Command generated a series of innovations empowering the Army to overcome the lethargy and distractions of protracted counter-insurgency warfare.

New equipment gave the Army technical and operational overmatch against all strategic competitors, rogue states, and emerging threats.

With virtualized synthetic training environments, the Army (active duty, Reserve, and National Guard) achieved a continuous, high-level state of unit readiness. The Army’s Soldiers achieved personalized elite-level fitness following tailored diet and physical fitness training regimens. No adversary stood a chance… after the Army arrived.

In the years leading up to 2030, the U.S. Army enjoyed the status of being the world’s most powerful land force. The United States’ national security was squarely centered on deterrence with diplomatic advantage deriving from military superiority. It was a somewhat surprising curiosity when this superiority was challenged by a land invasion of an allied state in the middle of Eurasia. This would not be the only surprise experienced by the Army.

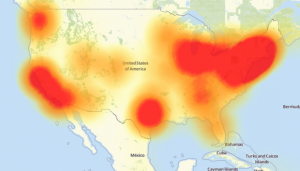

The overseas contest unfolded along a fairly predictable pattern, one that was anticipated in multiple war games and exercises. A near-peer competitor engaged in a hybrid of operations against a partner nation. They first acted to destabilize the country, and then, within the confusion created, they invaded. In response to the partner’s request for assistance, the U.S. authorized mobilization and deployment of active and reserve component forces to counter the invasion. The mission was straightforward: retake lost ground, expel the adversary, and restore local government control. This was a task the Army had trained for and was more than capable of successfully executing. It just had to get there. While the partner nation struggled with an actual invasion, a different struggle was taking place in the U.S. homeland. The adversary combined a series of relatively minor cyber, information, and physical disruptions, which, taken together, overwhelmed the Army Enterprise. Each act focused on clogging individual systems or processes needed to execute the mobilization and deployment functions.

Cyber-mercenaries, paid in cryptocurrency, attacked the information environment and undermined the communication mechanisms essential for mobilizing the Army. Building on earlier trials in Korea and Europe, a range of false orders were sent to units and individuals. These false orders focused on early entry forces and reserve units needed to open ports and railheads in the United States. Compounding the situation, misleading information indicating that the mobilization had been cancelled was simultaneously placed on social media and in the news. These efforts created so much uncertainty in the minds of individual Soldiers over their place of duty that initial musters for key reserve component units ran at less than 40% strength. Days were added to mobilization timelines as it took time for accurate information to be disseminated and formations to build to full strength.

Focused cyber attention was given to individuals with critical enabling jobs – not just commanders or senior NCOs – but those with access to arms rooms and motor pools. Long-standing efforts to collect PII from these individuals allowed the adversary to compromise credit scores, alter social media presence, and target family members. Soldiers with mission-related demands already on their hands now found themselves unable to use their credit cards, fuel their vehicles, or operate their cell phones. Instead of focusing on getting troops to the battle, they were caught in an array of false social media messages about themselves or their loved ones. Sorting fact from fiction and regaining their financial functionality competed for their time and attention. Time was lost as Soldiers were distracted and overwhelmed. Arms rooms remained locked, access to the motor pool was delayed, and deployments were disrupted.

The communities surrounding Army installations also came under attack. Systems below the threshold of “critical,” such as street lights, traffic lights, and railroad crossings, were all locked in the “off” position, making road travel hazardous. The dispatch systems of key civilian first responders were flooded with misleading calls reporting false accidents, overwhelming response mechanisms and diverting or delaying much-needed assistance. Soldiers were prevented from getting to their duty stations or transitioning quickly from affected communities. In parallel, an information warfare campaign was waged with the aim of undermining trust between civilian and military personnel. False narratives about spills of hazardous military materials and Soldiers being contaminated by exposure to diseases created by malfunctioning vaccines added to the chaos.

Key utility, water, and energy control systems on or adjacent to Army installations, understandably a “hard” target in the cyber context, were of such importance that they came under near-constant attack across all their operations, from transmission to customer billing. Only those few installations that had invested in advanced micro-grids, on-site power generation, and storage were able to maintain coherent operations beyond 72 hours. For most installations, backup generators that worked individually when the maintenance teams were present for annual servicing cascaded into collective failure when they all operated at once. For the Army, only the 10th and 24th Infantry Divisions were able to deploy, thanks to on-site energy resilience.

Small but significant physical attacks occurred as well. Standard shipping containers, pieces of luggage, and Amazon Prime boxes were “weaponized” as drone transports, with their cargo activated on command. In the key out-loading ports of Savannah and Galveston, shore cranes were disabled by homemade thermite charges placed on gears and cables by such drones using photo recognition and artificial intelligence. Repairing and verifying the safety of these cranes added days to timelines and disrupted shipping schedules. Other drones deployed, having been “trained” with thousands of photos to fly into the air intakes of jet engines, either military or civilian. Only two downed airliners and a few near misses were sufficient to shut down air transportation across the country and initiate a multi-month inspection of all truck stops, docks, airports, and rail yards trying to find the “last” possible container. Perhaps the most effective drone attacks occurred when such drones dispersed chemical agents in municipal water supplies for those communities adjacent to installations or along lines of communication. The effects of these latter attacks were compounded by shrewd information warfare operations to generate mass panic. Roads were clogged with evacuees, out-loading operations were curtailed, and key military assets that should have been supporting the deployment were diverted to provide support to civil authorities.

Cumulatively, these cyber, informational, social, and physical attacks within the homeland and across Army installations and formations took their toll. Every step in the deployment and mobilization processes was disrupted and delayed as individuals and units had to work through the fog of friction, confusion, and hysteria that was generated. The Army was gradually overwhelmed and immobilized. In the end, the war for the partner country in Eurasia was lost. The adversary’s attacks on the homeland had given it sufficient time to complete all of its military objectives. The most lethal Army in history was “stuck,” unable to arrive in time. US command authorities now faced a much more difficult military problem and the dilemma of choosing between all-out war and accepting a limited defeat.

There’s a saying from the Northeastern United States about infrastructure. It refers to the tangled mess of roads and paths in New England, specifically Maine. Spoken in the Mainer or “Mainah” accent, it goes:

“You cahn’t ghet thah from hehah.”

That was the US Army in 2030. Ignoring its infrastructure and its vulnerabilities at home, it got caught in a Mainah Scenario. This was a classic “Pink Flamingo”: the US Army knew its homeland operations were a vulnerability, but it failed to prepare.

There were some attempts to recognize the potential problem:

– The National Defense Strategy of 2018 laid out the following:

It is now undeniable that the homeland is no longer a sanctuary. America is a target, whether from terrorists seeking to attack our citizens; malicious cyber activity against personal, commercial, or government infrastructure; or political and information subversion. New threats to commercial and military uses of space are emerging, while increasing digital connectivity of all aspects of life, business, government, and military creates significant vulnerabilities. During conflict, attacks against our critical defense, government, and economic infrastructure must be anticipated.

– Even earlier (in 2015), The Army’s Energy Security and Sustainability Strategy clearly stated with respect to Army installations:

We will seek to use multi-fuel platforms and infrastructure that can provide flexible operations during energy and water shortages at fixed installations and forward locations. If a subsystem fails or is temporarily unavailable, other parts of the system will continue to operate at an acceptable level until full functionality is restored…. Implement integrated and distributed technologies and procedures to ensure critical systems remain operational in the face of disruptive events…. Advance the capability for systems, installations, personnel, and units to respond to unforeseen disruptions and quickly recover while continuing critical activities.

And despite numerous other examples across industry, academia, and the military, only a few locations, installations, or organizations across the Army embraced the notion of resilience for homeland operations. Installations were not considered a true “weapons system” and were left behind in the modernization process, creating a vulnerability that our enemies could exploit.

Installations are a flock of 156 pink flamingos wading around the beach of national security. They are vulnerable to disruption that would have a very real impact on readiness and the timely application of combat power. With the advance of technology applications, these threats are not for the Army of tomorrow; they affect the Army today. Let us not get stranded in a Mainah Scenario.

If you enjoyed this post, please also see Dr. Jason R. Dorvee’s article entitled, “A modern Army needs modern installations.”

Dr. Jason R. Dorvee serves as the U.S. Army Engineer Research and Development Center’s liaison to the Office of the Assistant Secretary of the Army for Installations Energy and the Environment (ASA IE&E), where he is assisting with the Installations of the Future Initiative.

Mr. Richard G. Kidd IV serves as the Deputy Assistant Secretary of the Army for Strategic Integration, leading the strategic effort to examine options for future Army installations and the strategy development, resource requirements, and overall business transformation processes for the Office of the ASA IE&E.

Mr. John R. Thompson serves as the Strategic Planner, Office of the ASA IE&E, Strategic Integration.

If China were to succeed in realizing the full potential of quantum technology, the Chinese People’s Liberation Army (PLA) might have the capability to offset core pillars of U.S. military power on the future battlefield. Let’s imagine the worst-case (or, for China, best-case) scenarios.

Although there will be options available for ‘quantum-proof’ encryption, the use of public key cryptography could remain prevalent in older military and government information systems, such as legacy satellites. Moreover, any data previously collected while encrypted could be rapidly decrypted and exploited, exposing perhaps decades of sensitive information. Will the U.S. military and government take this potential security threat seriously enough to start the transition to quantum-resistant alternatives?

At present, quantum computing, while approaching the symbolic milestone of “quantum supremacy,” faces a long road ahead, due to challenges of scaling and error correction.

As China challenges American leadership in

1. Army of None: Autonomous Weapons and the Future of War, by Paul Scharre, Senior Fellow and Director of the Technology and National Security Program, Center for a New American Security.
