[Editor’s Note: Earlier this year, COL Scott Shaw, then Commander of the Army’s Asymmetric Warfare Group, in an episode of Army Mad Scientist’s The Convergence podcast, described how the development of sensing technologies has made it increasingly challenging to hide on the battlefield. When combined with developments in artificial intelligence (AI) that will increase the tempo of warfare, it is likely that survivability moves will be required near-constantly. In a separate podcast episode, COL John Antal (USA-Ret.) described battlefield transparency and masking as two of the ten lessons learned from last year’s Second Nagorno-Karabakh conflict. Despite camouflage, Armenian command posts and air defense assets were easily targeted and destroyed. If you are sensed, you are targeted; and if targeted, you are destroyed or rendered inop. This makes masking essential for survival in the Future Battlespace.

Today’s post by guest blogger Chris Butler explores how randomness can be leveraged to mask patterns and avoid detection in a compelling story of future combat, imaginatively supplemented with excerpts from a nextgen Marine Corps Doctrinal Publication (MCDP), followed by an insightful analysis addressing why we will need to be less predictable and more random to avoid detection (and destruction) on future battlefields. Adversaries will exploit our inherent human patterns of training, indoctrination, and thinking with AI, machine learning, and a web of sensors. As these algorithmic tools proliferate, it will not be kinetic technology that wins future conflicts, but the ability to mask our decision making processes to survive and achieve battlefield dominance — Read on!]

“Pattern detected,” the voice chirped in the ear of Corporal 4356, also known as Brick. He was being too obvious in his route. He blinked three times and a virtual D20 rolled in his HUD.

“12.”

He edged further along the wall and then ran directly to the left to the opposite side of the street. The mechanical mule silently followed his pattern ten feet behind him.

“Pattern detected. Recommendation: Cover in place.” He dropped into a crouch behind a dumpster. The display updated to show he had taken 9,344 steps so far today, almost at his 10,000 goal.

He felt his jacket constrict around his torso. The ground in front of Brick exploded into sparks.

“Hold!” the voice immediately stated firmly.

Thankfully, the angle was bad for the shooter due to his change in path.

An explosion took the side of a building about a block up and the gunfire stopped. He looked back at the mule’s smoking RPG.

“All clear!” said the voice and showed

================

MCDP 1-8

SSIC 03000 Operations & Readiness

Predictability

FOREWORD

In this document you will find doctrine and standard operating procedures to avoid detection and keep you and your team alive.

If you remember watching Netflix when you were a kid, you know that the algorithm tries to learn about you. It then uses that information to predict what you like. Netflix as a military contractor is a much different organization now.

This guide is meant to help keep the algorithms that have been adopted by forces worldwide from predicting where you will be and where you are going. If they can do this, they can and will use this information to target and kill you and your team.

Don’t be predictable.

================

Returning to Base Alpha wasn’t a comfort. A video message from Brick’s pregnant wife:

“I can’t wait for you to come home! I don’t know what you did but a bonus pay dropped into our account. I bought some new baby shoes. Aren’t they cute?” They were.

Brick stepped into one of the airdropped server farms and meeting rooms. As he swiped his badge in passing, a summary screen of his performance reported:

“New 5 star rating from your manager! Avg. score: 4.68. Tip: Consider checking in verbally with your commander at your next convenience to build more rapport.”

Passing by a few terminals he saw a familiar message: “Battlespace predictiveness is high.”

“It looks like a lot of the battlespace modules are pointing fingers at each other, including cyber. Do we need to shuffle our playbook?” CO 23 looked out over the room of analysts.

Silence. You could hear a dice drop.

“We could increase the randomness a bit more,” said one analyst.

The screens at the front showed “please wait.” It might as well have been a magic eight ball being turned upright when a new list of COAs appeared.

Two were particularly high risk to Brick under the “Top picks for CO 23” section: “More sweeps for local compute,” “Late arrival of Platoon A,” and “Limited resupply of forward force.”

=================

MCDP 1-8

Predictability

CHAPTER 3

When you don’t have an Algorithmic Buddy

The hardest time is when tools that you depend on like your rifle or algorithmic buddy aren’t working. At this time you need to take it upon yourself to not be predictable.

There are a lot of reasons why you may not have access to an algorithmic buddy, such as EW jamming or equipment malfunctions. When disconnected, calculations will be limited without access to the Battlespace Edge…

===============

The route took the corporal adjacent to one of the last, heavily contested tungsten mines in the region. The chip manufacturing centers in Taiwan, China, and Vietnam didn’t like all the theft and lost shipments.

“Pattern detected.” He froze and threw himself down an alleyway. The mule hugged the outer wall of the building that he was walking along.

No sound or movement at all.

From his viewpoint he saw what looked like a large bundle of cables, probably communications related. It went from the building to the street and then disappeared below the street towards the mine.

“Oracle, I have visuals on wired comms. Please advise.” Nothing but static. He saw the red light showing a disconnected state. Possibly due to a jamming device that was nearby.

“Mule, cover the entrance to the alleyway,” he subvocalized.

“Copy.”

He carefully edged up the wall and towards a door that was cracked open to allow the cables to snake through. He couldn’t see inside the dark room.

“Pattern detected.”

“S#!+” he whispered to himself. He kept low to the ground while pushing open the door. He slowly entered the room, weapon drawn.

“Jeez, I can’t even surprise my Netflix account anymore.”

In a corner, there were three large monitors, a glowing keyboard cycling through rainbow colors, and a stack of communications equipment. The screen showed connection status for VPNs and an active jammer. A smaller window in one corner was showing a video. The scroll of green text on the screens illuminated other crates that filled the room.

“F@#$^+& extremist weebs.” Brick walked up to the displays to read the text and watch the anime porn cycling in the corner.

“This must be how they are getting the extra compute. It’s a f@#$^+& hardline.” He pulled his ruck off his back and pulled out some wire cutters and a static bag for some of the components.

A webcam was capturing his entrance into the room. Somewhere, over seven thousand miles away in a West Virginia data center, various services were being spun up and killed in rapid succession to avoid being detected. Calculating and predicting.

“Pattern detected.”

=============

“Sir, we probably lost ‘Brute Force 3’ in that blast we just detected. His locator is now available after the jamming has stopped. No detectable movement patterns.”

“That explosion really did a number on the next steps,” another analyst pointed out. The main screen showed a set of plans with titles “Trending for the US Military” and “Continue executing for CO 23.”

The commander ordered, “Roll those bones again.”

=============

The Personalization of Warfare

We are creatures of habit. We generally take orders and we execute them based on training.

The training tells us how we should act and how we are expected to act. That is dangerous in a world full of systems constantly seeking to detect and exploit patterns, and it becomes more dangerous still as machine learning, deep learning, and artificial intelligence are commodified and distributed.

Future operators need to consider how their biases, patterns, and general predictability can be exploited by our enemies.

When you get your recommendations from Netflix, it is looking at everything you have watched previously (or passed over) to create a profile. That profile is combined with many other peoples’ profiles to train models that will do a better job of offering you the next movie.

Today, these systems can predict our behavior in the near term with relative accuracy. As more data is collected, more specific models are created, and more planning uses these systems, their prediction accuracy will continue to increase and their horizon will extend further into the future.

Now add to this adversarial relationships between two sides of a conflict, or one side of many in a complex ongoing interaction between multiple parties. Being “one step ahead of the enemy” in this world is predicting their next step. Just like they are trying to predict your next step. And the step that you think they are thinking. And so on… Everyone is trying to get inside of everyone else’s decision loop.

In adversarial situations, these systems will attempt to predict what you intend to do and use that knowledge against you. In the case of the Army, it will be used to predict what a Soldier is about to do and use that information to ambush or attack them to the enemy’s advantage.

Patterns are Everywhere

When adversaries are aided by systems that are constantly hunting for a signal to exploit, we need to think about how predictable humans are. And we are very predictable! Your identity can be predicted with 95% accuracy based on anonymized information. We are full of patterns.

Virtual reality systems like the Oculus use an algorithm to predict where your head will be a few milliseconds ahead of when it will be there to facilitate lower latency in rendering. This is necessary to provide the user with an experience that doesn’t cause nausea. Even our head movements are predictable when playing high intensity games.

What many Soldiers would call “situational awareness” is simply a set of patterns that they are taught to understand and take action on. As we know with magicians and con artists, they can exploit those instincts and subvert our best defenses.

The logic of lethal exposure gives the advantage to the party that is more stealthy. There are many ways to be stealthy, including technological advancements. If a defending force can make an attacking force think it is somewhere when it really is somewhere else, the defending force can use that exposure imbalance to its advantage. When the tables turn, so does the benefit.

Getting out of the Loop

We can’t easily break out of the patterns we have. Daniel Kahneman, Nobel Prize winner and author of Thinking, Fast and Slow, has said that getting out of your own way is hard. In a recent interview he said the following:

“It’s hard to change other people’s behavior. It’s very hard to change your own.”

Not only is it hard to break out of these patterns, but these patterns are reinforced by the fact that objectives are not easily hidden in conflict. Sometimes the right course of action is the one that seems most direct and likely.

Adversarial thinking uses this to its advantage. A key aspect of red teaming is to find holes in defenses and, more importantly, in your strategy. They do this by detecting and exploiting the patterns.

In cryptography, a code that is impossible to break is one that employs a one-time pad. This is because a truly random key that is used only once leaves no patterns for detection techniques to find. In the end, you need to attack the most vulnerable part: humans.

This is why randomized patrols are the hardest to subvert. Randomness is a way out of the biases you are trapped in and a tool to avoid detection of patterns.
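As a rough illustration of what a randomized patrol could look like in software, here is a minimal Python sketch. The function name, the route labels, and the idea of drawing both start time and route independently are assumptions made for this example; they are not taken from any doctrine or from the story above. The sketch defaults to `random.SystemRandom`, which draws from the operating system's entropy source instead of a seeded generator that could be reproduced.

```python
import random
from typing import List, Optional, Sequence, Tuple


def random_patrol_schedule(
    routes: Sequence[str],
    patrols: int,
    window_minutes: int,
    rng: Optional[random.Random] = None,
) -> List[Tuple[int, str]]:
    """Return (start_minute, route) pairs, sorted by start time.

    Hypothetical helper: each patrol gets an independently drawn start
    time inside the window and an independently drawn route, so neither
    the timing nor the path follows a fixed rotation.
    """
    if not routes:
        raise ValueError("at least one route is required")
    if patrols < 0 or window_minutes <= 0:
        raise ValueError("patrols must be >= 0 and window_minutes > 0")
    if rng is None:
        # OS-backed randomness; pass a seeded random.Random only for testing.
        rng = random.SystemRandom()
    schedule = [
        (rng.randrange(window_minutes), rng.choice(routes))
        for _ in range(patrols)
    ]
    return sorted(schedule)


if __name__ == "__main__":
    for minute, route in random_patrol_schedule(
        ["north gate", "river road", "market"], patrols=4, window_minutes=480
    ):
        print(f"T+{minute:03d} min: {route}")
```

Drawing each patrol independently means the same route can come up twice in a row, which is exactly what a human planner tends to avoid and what makes human-made schedules easier to predict.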

Adding more Randomness

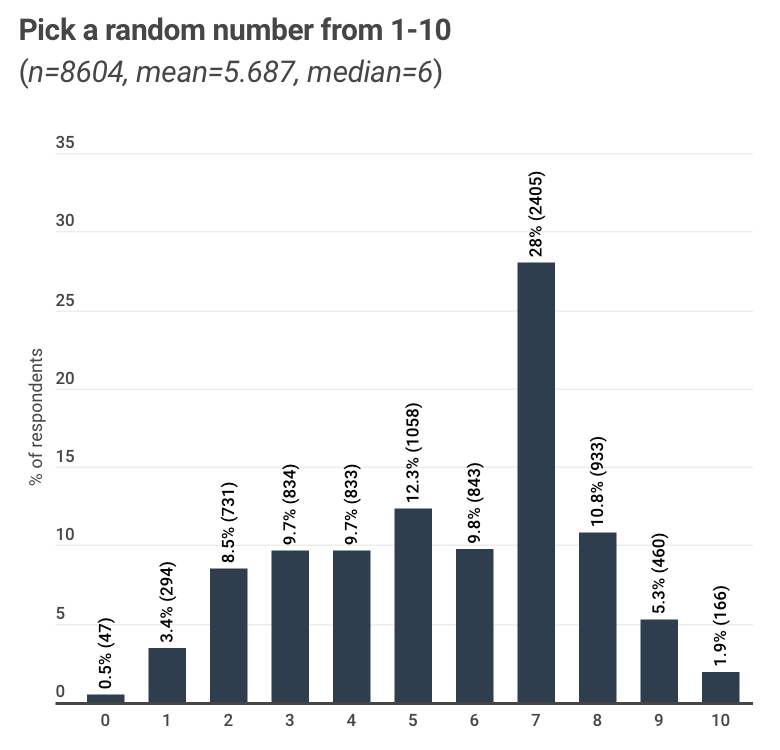

The problem is that humans are really bad at randomness. In fact, when we try to come up with random numbers, we do a horrible job.

Future operators will utilize tools to detect and subvert various patterns — to protect themselves and deceive their adversaries.
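One simple way such a tool might flag a pattern is to measure how often a sequence of choices switches. The sketch below is only an illustration under stated assumptions: the function names and the 0.15 tolerance are invented for this example, and the check applies to a two-option sequence (say, left and right turns) where an unbiased random source would switch on about half of the adjacent pairs. People asked to "be random" are often reported to switch too frequently, so a rate far from 0.5 is a hint, not proof, of a pattern.

```python
from typing import Sequence


def alternation_rate(sequence: Sequence) -> float:
    """Fraction of adjacent pairs in which the choice changes."""
    if len(sequence) < 2:
        raise ValueError("need at least two choices")
    switches = sum(1 for a, b in zip(sequence, sequence[1:]) if a != b)
    return switches / (len(sequence) - 1)


def looks_patterned(sequence: Sequence, tolerance: float = 0.15) -> bool:
    """Crude flag: True when the switch rate is far from the 0.5 expected
    of a fair two-option random source. The tolerance is an illustrative
    assumption, not a calibrated statistical threshold."""
    return abs(alternation_rate(sequence) - 0.5) > tolerance


if __name__ == "__main__":
    for turns in ("LRLRLRLR", "LLRLRRLL"):
        print(turns, round(alternation_rate(turns), 2), looks_patterned(turns))
```

A real detector would need far more than one statistic and a proper significance test, especially on short sequences, but even this crude check catches the strict left-right-left-right habit.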

A very simple randomness technique has been used by poker players. When considering when to bluff, some players will check their watch and, based on where the second hand is, they will either bluff or not. This isn’t exactly random, but the timing ends up seemingly random, making it difficult for their opponents to discern a pattern.
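The watch trick can be sketched in a few lines of Python. The function names and the 20-second window are illustrative assumptions, not something the poker players or this post specify; the point is only that an external clock turns the decision into a biased coin flip that the player does not consciously control.

```python
import time


def should_bluff(second: int, bluff_window: int = 20) -> bool:
    """Bluff when the second hand sits inside the first `bluff_window` seconds.

    With a window of 20 the player bluffs on 20 of 60 possible positions,
    i.e. roughly one hand in three.
    """
    if not 0 <= second < 60:
        raise ValueError("second must be in the range 0..59")
    if not 0 <= bluff_window <= 60:
        raise ValueError("bluff_window must be in the range 0..60")
    return second < bluff_window


def should_bluff_now(bluff_window: int = 20) -> bool:
    """Read the wall clock, as the player reads a watch."""
    # tm_sec can report 60 or 61 around leap seconds, so wrap it into 0..59.
    return should_bluff(time.localtime().tm_sec % 60, bluff_window)


if __name__ == "__main__":
    print("Bluff this hand?", should_bluff_now())
```

As the paragraph above notes, this is not truly random: anyone who can see the same clock can reproduce the decision. Where that matters, a source such as Python's `secrets` module would be a safer choice than the time of day.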

The most obvious application for military operations would be at the tactical level: how do you use randomness to avoid ambush? How do you execute more effective patrols to catch clandestine elements?

This isn’t just for tactical thinking, though. At the operational level, where should you route supplies? From a strategic point of view, how should you vary your approaches to show strength (or feign weakness)?

Stochastic Today

As more data is collected, computing multiple options and multiple steps ahead becomes more costly. On the battlefield, an individual Soldier will not have access to the same compute power as that of someone deploying to a friendly cloud. There will be a trade-off between pre-calculating plans with enough randomness based on more compute, or adjusting plans to be more random based on more limited resources.

The enemy may not always have the ability or means to predict, but could be supported by proxy via state and non-state actors with access to do the calculations.

Judgement isn’t just about mindlessly acting, but also acting towards the right goal. Even the goal is subjective or nuanced. Boyd has always talked about the three dimensions of conflict being physical, mental, and moral. Today’s militaries have learned valuable lessons about only focusing on the physical against insurgent forces.

Preparing for the Future

Over the next 15 years, nations will continue to increase the power of their respective militaries, but what will be most impactful will be how to direct them. We don’t need more creative thinkers to challenge assumptions or form another red team. We need to fight in a way that subverts the current patterns of warfare.

The Colonel Blotto game is a classic game theory example of this. You are given different battlefields in which you need to commit a certain number of Soldiers (or resources). Whichever side commits the most resources to a battlefield wins it. The side that wins the most battlefields is the victor.

Classically “might makes right” when you have a smaller set of battlefields. However, once you expand this to a very large set of locations, the underdog can start to win based on intelligent placement. In fact, knowing more about how your opponent will act allows you to act in a way they won’t expect. This is true for larger and smaller organizations.
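The Blotto rules described above are simple enough to sketch in Python. Everything named here (`fields_won`, `random_allocation`, the troop counts) is a hypothetical example chosen for illustration. The first function scores one round; the second draws a random split of a force across battlefields, which is one naive way to make an allocation hard to predict and is not claimed to be an optimal strategy.

```python
import random
from typing import List, Sequence, Tuple


def fields_won(side_a: Sequence[int], side_b: Sequence[int]) -> Tuple[int, int]:
    """Count battlefields won by each side; ties go to neither."""
    if len(side_a) != len(side_b):
        raise ValueError("both sides must allocate to the same battlefields")
    a_wins = sum(1 for a, b in zip(side_a, side_b) if a > b)
    b_wins = sum(1 for a, b in zip(side_a, side_b) if b > a)
    return a_wins, b_wins


def random_allocation(total: int, fields: int, rng: random.Random) -> List[int]:
    """Split `total` units across `fields` battlefields at random cut points."""
    if fields < 1 or total < 0:
        raise ValueError("need at least one battlefield and a non-negative total")
    cuts = sorted(rng.randint(0, total) for _ in range(fields - 1))
    bounds = [0] + cuts + [total]
    return [bounds[i + 1] - bounds[i] for i in range(fields)]


if __name__ == "__main__":
    # The stronger side (100 units) spreads evenly, which is easy to predict.
    strong = [20, 20, 20, 20, 20]
    # The underdog (70 units) anticipates that and concedes two battlefields.
    underdog = [23, 23, 24, 0, 0]
    print(fields_won(strong, underdog))  # underdog takes three of five fields
    print(random_allocation(100, 5, random.SystemRandom()))
```

In the example the underdog wins with 30 fewer units only because the stronger side's even split was predictable. A randomized allocation removes that certainty, at the cost of occasionally producing a poor split.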

In the end, our adversaries will exploit our patterns of training, indoctrination, and thinking with AI, machine learning, and other techniques. As these algorithmic tools become more available, an arms race will ensue. It will not be the kinetic technology that wins but the ability to hide your decision making processes.

To stay a step ahead, we need to be less predictable and be more random.

If you enjoyed this post, check out the following related content:

Nowhere to Hide: Information Exploitation and Sanitization

War Laid Bare and Decision in the 21st Century, by Matthew Ader

Battlefield sensing and AI discussions in The Future of Ground Warfare with COL Scott Shaw and associated podcast

Battlefield transparency and masking discussions in Top Attack: Lessons Learned from the Second Nagorno-Karabakh War and associated podcast

The Exploitation of our Biases through Improved Technology by Raechel Melling

Takeaways Learned about the Future of the AI Battlefield

Integrating Artificial Intelligence into Military Operations, by Dr. James Mancillas

Prediction Machines: The Simple Economics of Artificial Intelligence

Chris Butler is a product manager, writer, and speaker with over 20 years of product management leadership at Microsoft, Waze, KAYAK, Facebook Reality Labs, and Cognizant. Most recently he has been working through the application of adversarial mindsets to product development through decision forcing cases and wargaming. Learn more about Chris and his work through his LinkedIn or Twitter accounts.