[Editor’s Note: In today’s post, the Mad Scientist Laboratory explores the future of teaming in an increasingly hyper-kinetic operational environment. How will Army teams form, train, deploy, and operate on future battlefields defined by machine-speed OPTEMPOs? And how will trust be established and maintained when you are no longer able to shed blood, sweat, and tears with every member of your team? Read on to explore the ramifications of man-machine teaming!]

The future operational environment will be characterized by speed. Speed will increase on the battlefield, in decision-making, and in weapons systems, affecting how future teams are created, managed, and led.

Future teams should be equipped to fight in a congested information environment where human judgment is incapable of contending with the pace of war fought at machine-speed. On the future battlefield, the information environment may dismantle trust, impacting data integrity and severely hindering all team formations including man-man, man-machine, and machine-machine. Weapons systems will evolve to execute faster targeting and engagement requiring fewer humans in the loop, which may influence how future teams think, plan, and execute.

Future Warfighting Teams: Composition and Competencies

To effectively operate in the future operational environment, teams may need to assemble more quickly, adapt faster, and learn rapidly. With artificial intelligence systems executing machine-speed collection, collation, and analysis of battlefield information, warfighters and commanders will be able to focus on fighting and decision-making.

While there is no shared understanding of what teaming in the future will look like, future teams should be multidimensional, tailorable, and adaptable to operate in a disrupted, degraded, and denied operational environment with decentralized mission command.

The U.S. Army will need to consider team member stability when determining how to optimize team composition to fight and win in the future. Both static and dynamic teams have advantages and disadvantages. A dynamic, cross-functional team forms quickly and disbands based on mission requirements, which could drive innovation and creativity; team leaders will need to quickly assemble, train, and deploy new teams to execute specific missions. However, cohesion and team performance could suffer. Static teams, which rotate in and out of operational areas together and are replaced by an entirely new team, might be easier to train and more integrated. Nevertheless, this approach can erode team skills and breed an aversion to change. Moreover, static teams that rotate as a unit are forced to learn each new operational environment and establish new relationships within it every time they deploy. Key questions include: How will the U.S. Army integrate artificial intelligence into team formations? Will machines be viewed as tools or teammates, and will rotating members be humans or machines? How will the Army evaluate future team effectiveness?

The speed of technology will continue to drive change in the operational environment, requiring future teams to cultivate technical, critical, creative, and cultural competencies. Individual Leaders and Soldiers may not need specific technical expertise, but they should understand technical capabilities well enough to build trust and exploit technological innovation. For example, deepfakes are becoming more sophisticated; countering the disinformation they spread may require critical thinking and a basic technological understanding, but not hard technical expertise at the tactical level. Leaders and Warfighters will likely become disconnected on the battlefield, forcing subordinate Leaders and teams to make autonomous decisions, which might necessitate a flattened hierarchy and optimally placed personnel. Future teams will need to be adaptive and to think creatively and quickly in contested domains to seize the offensive and achieve overmatch on a disrupted, disconnected battlefield defined by speed and lethality. Teaming in the future will frequently include Joint, Interagency, and International teams, which require culturally astute, emotionally intelligent members who can empathize with and incorporate divergent views to harness the power of diversity, improve collaboration, and better inform decision-making.

Training teams will likely be significantly different in the future and will include both virtual and live training. However, the standard Crawl – Walk – Run approach could still guide it. During the Crawl and Walk phases, teams can begin to learn skills, build relationships, and establish a foundation virtually. This would reduce resource requirements and produce efficiencies, saving time and money while enhancing team performance through increased repetition. The Run phase could be conducted as live training, which will build trust, replicate battlefield conditions, and enable leaders to evaluate their teams prior to mission execution.

Future Warfighting Teams: Vulnerabilities

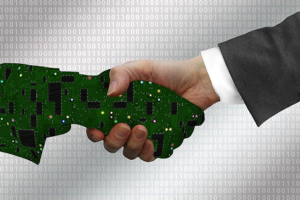

The evolution of teaming to include more machine partners reveals some critical vulnerabilities. The central issues concern trust, data integrity, and team cohesion, which are all inter-related. The Mad Scientist Laboratory has examined the issue of trust, finding that “a major barrier to the integration of robotic systems into Army formations is a lack of trust between humans and machines.” The cognitive load of the future information environment may be overwhelming for humans, requiring AI and machines to process and analyze the situation and rapidly produce a response based on data input. In time-sensitive situations, operators may not be able to verify and test the algorithm’s response, or even understand it. The Army may not have understandable AI and machines before it needs to use that technology, so humans may have to “blindly” trust that their machine teammates are correct without understanding how the algorithm arrived at that decision. This creates a paradox whereby humans are unable to fully trust the machines they are working with and yet are forced to rely on them to remain competitive in the future information environment.

The key component for a machine to produce a practical and trustworthy decision is the input data. Inaccurate, incomplete, or inappropriate data inputs will result in poor, or worse, dangerous conclusions. This data also represents a critical area of opportunity for bad actors to infiltrate and corrupt it, either at the collection or storage phase. If data input in the beginning is faulty, and if the result is used as an input in the next machine, then this creates a chain reaction of false outputs. Therefore, data integrity is paramount to the success of machine decision-making and incorporating machines into larger networks or teams. Investment in cyber security at all levels that handle data is necessary to protect the accuracy and authenticity of the information used to make potentially critical decisions.
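
The chain reaction of false outputs can be illustrated with a short sketch. The Python below is a hypothetical example, not an Army system or any specific program's method: it assumes two invented machine teammates (`sensor_stage` and `analysis_stage`) that share a secret key, and each stage attaches an HMAC-SHA256 tag to its output so the next stage can refuse input that was altered in transit or storage instead of passing it down the chain.

```python
import hashlib
import hmac
import json

# Hypothetical shared key for illustration only; a real system would need
# proper key generation, distribution, and rotation.
SHARED_KEY = b"example-key-for-illustration-only"


def sign(payload: dict) -> dict:
    """Wrap a stage's output with an HMAC-SHA256 tag over its canonical JSON."""
    body = json.dumps(payload, sort_keys=True).encode()
    tag = hmac.new(SHARED_KEY, body, hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}


def verify(message: dict) -> dict:
    """Return the payload only if its tag matches; otherwise refuse to pass it on."""
    body = json.dumps(message["payload"], sort_keys=True).encode()
    expected = hmac.new(SHARED_KEY, body, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    if not hmac.compare_digest(expected, message["tag"]):
        raise ValueError("integrity check failed; input rejected")
    return message["payload"]


def sensor_stage() -> dict:
    # Hypothetical first machine: reports a detected track and signs it.
    return sign({"track_id": 7, "classification": "vehicle", "confidence": 0.91})


def analysis_stage(message: dict) -> dict:
    # Hypothetical second machine: consumes upstream output only after verifying it,
    # then flags high-confidence tracks for human review and signs its own output.
    data = verify(message)
    recommend_review = data["confidence"] >= 0.9
    return sign({"track_id": data["track_id"], "recommend_review": recommend_review})


if __name__ == "__main__":
    good = sensor_stage()
    print(verify(analysis_stage(good)))  # {'track_id': 7, 'recommend_review': True}

    tampered = sensor_stage()
    tampered["payload"]["confidence"] = 0.2  # altered after signing
    try:
        analysis_stage(tampered)
    except ValueError as err:
        print(err)  # integrity check failed; input rejected
```

One limit of this design is worth stating plainly: a valid tag only shows that the data was not changed after it was signed. It cannot show that the sensor collected accurate data in the first place, so faulty inputs at the collection phase would still call for human judgment and other safeguards.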

The cohesion, or lack thereof, of a future team may present its own set of challenges. Harkening back to the theme of trust, it may be difficult to convince a human team to fully “trust” a machine team member, particularly if they do not understand how it works or comes to its decisions. The concept of machine teammates may also hinder the development of trust because machines are as yet unable to convey human emotions, like empathy, or pick up on human nuances that living team members notice and adapt to. Conversely, and perhaps ironically, there is also the risk that humans begin to rely too much on their machine partners. In this case, operators believe that the machine will always be right, and may subsequently neglect to apply human common sense to its outputs and act on algorithmic recommendations without verification. If the data or algorithms do not function as intended and provide a poor output to human operators or another machine, the results could be unfavorable, with real-life consequences.

There are also undoubtedly “zero-day” vulnerabilities for teaming. What, if any, is the alternative to eventually relying on machine-generated decisions in the future operational environment? What effect will machine teammates have if their human partners are not able to trust or connect with them or neglect using them at all? Could a bad personal history with machine learning, AI, or other advanced technologies hamper the integration of machine teammates with humans?

Conclusion

The evolution of teaming is inevitable. The speed of the future operational environment will strain teams in several ways: condensing human OODA loops, necessitating dynamic and fluid teams with a variety of skillsets for ad hoc or short-term assignments, and requiring the collection and analysis of massive amounts of data. This evolution reveals several considerable vulnerabilities: humans are forced to trust machines more often and for more impactful decisions, data integrity becomes even more critical, and team cohesion could be threatened. Trust is the common vector for all of these vulnerabilities, and any corruption of this trust could be detrimental to both the functioning of the team and overall mission success.

If you enjoyed this post, check out the following related posts:

Integrating Artificial Intelligence into Military Operations, by Dr. James Mancillas, as well as his complete report here

The Guy Behind the Guy: AI as the Indispensable Marshal, by Mr. Brady Moore and Mr. Chris Sauceda

AI Enhancing EI in War, by MAJ Vincent Dueñas

The Human Targeting Solution: An AI Story by CW3 Jesse R. Crifasi

An Appropriate Level of Trust…

… read Mad Scientist’s Crowdsourcing the Future of the AI Battlefield information paper;

… and peruse the Final Report from the Mad Scientist Robotics, Artificial Intelligence & Autonomy Conference, facilitated at Georgia Tech Research Institute (GTRI), 7-8 March 2017.

>>> REMINDER: The Mad Scientist Initiative will facilitate our next webinar on Tuesday, 18 August 2020 (1000-1100 EDT):

Future of Unmanned Ground Systems – featuring proclaimed Mad Scientist Dr. Alexander Kott, Chief Scientist and Senior Research Scientist – Cyber Resiliency, ARL; Ms. Melanie Rovery, Editor, Unmanned Ground Systems at Janes; and proclaimed Mad Scientist Mr. Sam Bendett, Advisor, CNA.

In order to participate in this virtual event, you must first register here [via a non-DoD network].