(Editor’s Note: The Mad Scientist Laboratory is pleased to present the following guest blog post by Mr. Nick Marsella. If you are interested in submitting a guest post, please select “Guest Bloggers” from the menu above and review the submission instructions.)

For more than a decade, I have been both an observer and participant in various efforts to examine the future [to include Mad Scientist events]. I have often been struck by how differently people approach the task; how they describe their work [using such terms as “predicting, assessing, speculating, or forecasting” – pick your favorite]; and the varying degrees of rigor in their analysis. I have also been astounded by the volume of research and publications devoted to the future from across the public and private sectors – and how often we seem to “rediscover” the same insights (e.g., the world is increasingly becoming urban).1

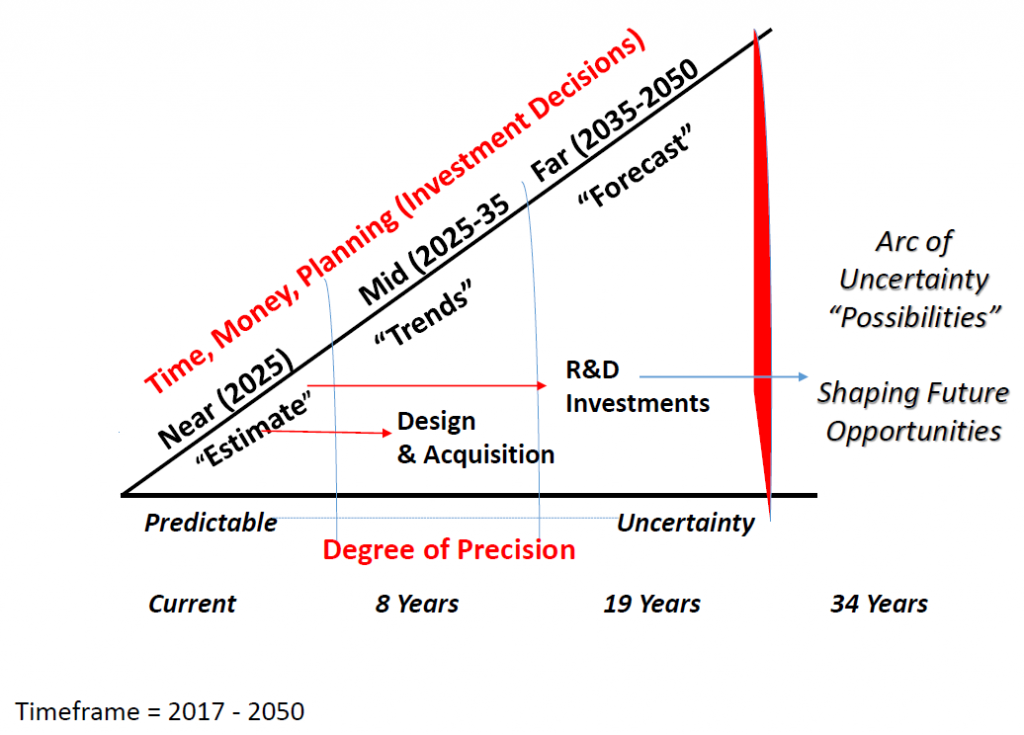

I suspect most people would agree that organizations must plan for the “near, mid, and far,” and that the further the “far” lies in the future, the greater the degree of uncertainty. As the chart below illustrates, “futures work” informs senior leaders’ decisions on the design of strategies and organizations and on the acquisition of technologies for the near and mid-term. While the “far future” increases uncertainty (and is often speculative), it informs leaders on where to invest resources for research and development of ideas and technologies – which, in turn, helps shape the exploitation of future opportunities.

Unfortunately, futures work is not immune to three deadly sins, namely: estimates or forecasts of future developments can fall prey to cognitive bias; our work sometimes lacks clarity or sufficient specificity for the decision maker; and the work is not tied to solving a problem or to helping shape the future of an organization.

While there are many cognitive challenges to futures work, a brief survey of the literature indicates that perhaps the most challenging are: hubris, lack of imagination/paradigm blindness, trends faith, and mirror imaging.2

Hubris or overconfidence often translates into “this is the sole best solution or idea.” Hubris is also evident in overconfidence that the estimate or technology development will occur along a predictable time frame. One only needs to reflect on the cancellation of major programs, such as the Army’s Future Combat Systems (FCS), or to the catastrophic failures of two Space Shuttles, as illustrations that we sometimes place too much confidence in our ability to predict the rate of technology development or resilience of that technology. Among the solutions to hubris are: remain open-minded; maintain a healthy skeptical attitude; consult a friend or a devil’s advocate to help red team the idea; rigorously challenge assumptions; and look for disconfirming information. Keep in mind the old adage – “every new technology begets a new vulnerability.”

Paradigm blindness forces us to accept what we know as the answer at the expense of considering or exploring other options. We must continually re-examine our answers and options, a habit that for some is, as the historian Barbara Tuchman noted, “as rare as rubies in the backyard.” The oft cited quote from the New York Times editorial of 8 December 1903 illustrates this cognitive error: “A man carrying airplane will eventually be built, but only if mathematicians and engineers work steadily for the next ten million years.” Nine days later the Wright Brothers completed their first flight.

While the study of trends has many useful purposes and is a methodology often used by futurists, trends can be deceiving. As many have noted, the future is unknowable, and history – while valuable – is an imperfect guide.3 For example, it was a trend that the Chicago Cubs would never win the World Series – until they did. Similarly, any “technology” is useful and will continue to develop until it is replaced by something better. The doubling of computer power every two years, known as Moore’s Law, is a trend that some think will end in the near future.

Thinking about the future is hard work, requiring us to continually examine the rigor associated with these efforts and to avoid the cognitive biases inherent in our futures work.

We must balance imagination with realism. We must avoid the “sunk cost syndrome” – where we become afraid of killing off less productive research, projects, or investments, which then become what one author called “zombies”: absorbing resources, difficult to kill, and taking on a life of their own.

As many senior leaders have noted, our prediction or assessment of the future will never be precise and totally accurate. We can only aspire not to be too wrong. While this is true, and given that the future is always uncertain, our processes, mindsets, assumptions, and actions should not add to the uncertainty.

Nick Marsella is a retired Army Colonel and is currently a Department of the Army civilian serving as the Devil’s Advocate/Red Team for Training and Doctrine Command.

Part II of this blog post will examine the purposes of futures work and why such efforts [like Mad Scientist] are important: they help inform senior leaders about the future and drive informed decisions.

__________________________________

1 Duplication is not necessarily bad, since the work may provide insights from different vantage points or perspectives, and repetition of findings over time may add credibility to them and their conclusions. Yet, all too often, efforts within an organization continue to rediscover the same insights over multiple years – resulting in continual admiration of a problem. In my view, “rediscovery” often results from a failure to do a comprehensive literature review, which includes identification of insights or lessons already identified.

2 This is not to dismiss other challenges, such as confirmation bias and poor qualitative or quantitative methodologies – among many others – that result in invalid conclusions. See the National Research Council of the National Academies report, Persistent Forecasting of Disruptive Technologies (2010), or the many Defense Science Board reports on future technology development and red teaming.

3 Gray, C.S. (2015). Executive Summary. Thucydides Was Right: Defining the Future Threat. Strategic Studies Institute and U.S. Army War College Press. Summary can be found here.

The views expressed are the author’s and do not reflect the official position of the Department of Defense, Department of the Army, or Training and Doctrine Command.

I recently listened to a book that discussed sustaining technologies and discontinuous technologies. Large, well-managed corporations are very good at bringing sustaining technologies to the consumer. These include making cars more fuel efficient, increasing the number of megapixels in digital cameras, etc. Large corporations are not, however, good at bringing discontinuous technologies to the consumer. Examples of discontinuous technologies include digital vs film cameras, passenger air travel instead of passenger train travel, etc. The few large companies that were successful at bringing discontinuous technologies to the consumer did so by creating small branches that were separated from the work of the rest of the larger company. However, most discontinuous technologies are brought to the consumer by small upstart companies.

As we look at the future of warfare, the US military is very good at developing sustaining technologies, such as tanks, aircraft, ships, etc. Is the US military good at developing discontinuous technologies? The Capability Needs Analysis Process provides a solid foundation for prioritizing sustaining technologies, but is it the best process to justify investing in discontinuous technologies?

Many discontinuous technologies start out far inferior to their possible competitors; for example, the digital camera was initially inferior to film cameras. As a niche market develops and money is invested in research and development, the discontinuous technology surpasses the sustaining technology, giving the company that brought the discontinuous technology to market a significant advantage over its competition. An example is the Apple iPod vs the Sony Walkman. Small companies invest in discontinuous technologies because they cannot compete with the resources large companies invest in sustaining technologies.

Which budget-constrained nations are using discontinuous technologies to compete with the US, China, Russia, Iran, etc.? How does the US military leverage the knowledge gained by those budget-constrained nations to develop discontinuous technologies and their associated concepts?

Remember that FCS (originally named the Objective Force) was most likely influenced by President Bush’s “leap forward” in technology. It briefed well, but in actual wrench turning it failed, in part because the technology wasn’t there; and the lack of oversight of the first year or two of the FCS program’s funding sounded the death knell for anyone who was really looking. I was working the UAS side (the original six UAS in an FCS construct–four in the FCS BCT and two from above that later became one) in 2001-2003 and gladly handed that off from the HQDA G8/FDI office to the HQDA G8/FDV (Aviation), who seemed not to understand that all things UAS in the Army (at that time) were funded with intelligence money.

But I digress. The blog hits some very good points and examples. Taking a strategic look at the Wright brothers and the SGT York gun as examples of “let’s see if we can do this,” any consideration of failure has to include responsibility and oversight. Investing in RDT&E after the S&T aspects have been looked into is a better approach than the one taken with the SGT York gun, which failed not only in function but also in how it would fit into the overall scheme of the Force (a supercharged engine in an M48 tank chassis with an F-16 radar…what could go wrong? And this was the winning design over the competitor, the Liberty ADA system, which was on an M1 tank hull and had two Bushmaster 25mm cannons and 8 Liberty SAMs–sound familiar? Go look up the Russian 2S6 for an idea of what it would have looked like). The Army was moving to a higher mobility force (the Big 5), and anyone who thought an M48 tank (something my dad used in Vietnam in the late 1960s) with an unproven radar (unproven on a ground vehicle) was a good fit for that force probably excelled as a used-car salesman. Pretty sure the thought was “if the Soviets can do it (with the ZSU 23-4) we can too.”

But back to the strategic level, and a quote that fits perfectly, attributed to Charles H. Duell, Commissioner of the US Patent Office in 1899. Mr. Duell’s most famous attributed utterance is that “everything that can be invented has been invented.” Four years later the Wright brothers went flying (and before that there had been a lot of work on flight: http://mentalfloss.com/article/16814/who-flew-wright-brothers). Nearly 120 years later, one wonders what Mr. Duell would say about that.

Addressing the surprise attack on Pearl Harbor, let’s not forget several other factors. I would disagree that the US was mirror imaging the Japanese Empire. After all, why cut off steel and other resources if you think that an empire that had been stoked by presidents from Teddy Roosevelt on to expand and control, and that required key resources it didn’t have, wouldn’t go on the offensive? In fact, War Plan Orange was specifically focused on the Japanese, with the US fighting them alone, and that plan was developed in 1924 during the inter-war years. Additionally, let’s not forget that the US and its military were far more racist than they are today. The prevailing assumption was that Japan could never beat the US, could never have better fighters at the onset, and that its troops could never outperform Americans. After all, who would have believed, as of December 6, 1941, that a Japanese Imperial carrier strike group of 6 carriers could get from Japan to Hawaii and attack not once but twice, in complete surprise to the fleet and Army in Hawaii?

Primitive radar was in operation on Oahu and picked up the approaching Japanese aircraft; however, the detection was dismissed because of a lack of C2 structure, a lack of faith in new technology, and the coincidence of a flight of B-17s coming in at the same time (the radar crew that detected the Japanese planes only did so because the truck to take them to lunch was late).

Finally, looking at the SGT York gun and other cases (like faulty craftsmanship from the lowest bidders in basic equipment, from Vietnam era M-16s to unsealed M577 extension tents–you could see light through the holes where the stitching was, meaning any chemical attack by Saddam would have killed everyone inside Battalion and Brigade TOCs), the disconnect between the goals of the military (mission accomplishment) and those of the suppliers of its equipment (civilian corporations and vendors focused on profit) has to be considered.

This is an attribute few understand until they retire and go work outside the military. The military and DOD spend money to ensure our troopers live to fight another day. Manufacturers need money both to run a production line and to keep the stockholders happy with profits. Simply watch a C-SPAN broadcast when a Congressperson is, once again, railing at the military over why a program costs so much (when the same Congressperson voted to cut what was bought, failing to understand that the production line is set up for a set number of things and if you cut them, the cost of what is produced rises–simply look at the Zumwalt class destroyer; originally 48 were to have been built, but that was cut to 3, so no wonder the ships cost so much).

Finally, while there is a hubris aspect related to a requirement’s solution, there is also a “need to fail” aspect. Where the knee in the curve of returns on your investment lies has to be war-gamed out before, not during or after, someone changes the requirement completely (see: the Army Aerial Common Sensor aircraft, whose requirement kept changing AFTER the vendor was chosen and aircraft were under construction).

The book “The Innovator’s Dilemma: When New Technologies Cause Great Firms to Fail” refers to these as “disruptive” technologies. I highly recommend it. It provides some very interesting case studies of how new technologies (which started out as inferior to established solutions) eventually surpassed them to the detriment of many well established corporations.

Hi there, just wanted to mention, I loved this post.

It was helpful. Keep on posting!

I think this website holds some really superb information for everyone. “I prefer the wicked rather than the foolish. The wicked sometimes rest.” by Alexandre Dumas.

I am very happy with this blog. I am surely gonna share it with my friends and followers.